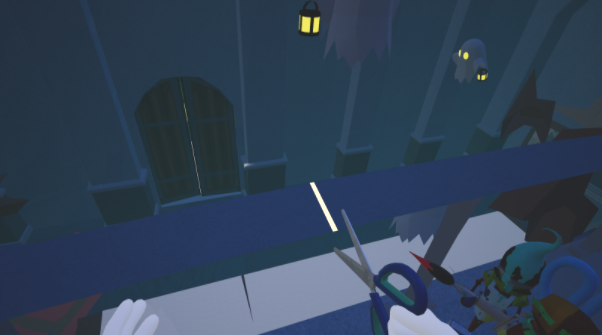

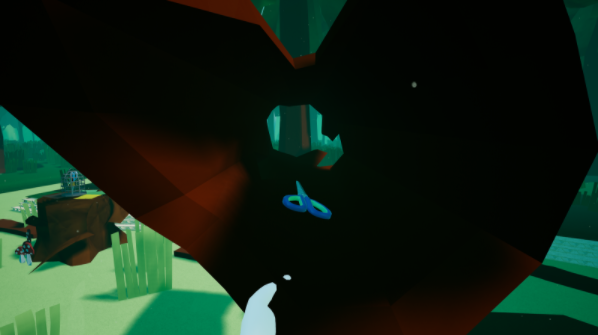

Lost Pages is a VR sandbox-puzzler taking place in a world made entirely out of paper. To find the missing scrap sprites the player must cut, glue and draw their way through a thin world.

During their adventures the player will not travel alone, though. They will be accompanied by all the scraps that have already been found. The scraps hold the player's tools, make sure nothing of value gets lost and are an all-around lively bunch.

Development time: 3 months (April 2017-June 2017)

Roles: Programming, level layout, lighting, audio coordination

Engine used: Unreal Engine 4

Language: C++ (and some Blueprints)

Gameplay Summary

The game starts with the player having nothing more than their bare hands. Soon they will find their first tool, and from there on it's total creative freedom. Everything inside the play area is interactive. You can cut down trees, grass, rocks, candle stands, railings and every other object you can find. You can also glue the pieces together, draw on them and erase everything again. Scraps are hidden in some obscure places, so just go around and do everything that comes to mind.

Sometimes a puzzle requires you to draw out a shape, sometimes it consists of building a fishing rod, at other times you have to build a structure and from time to time you have to look very closely to find a missing puzzle piece. Currently there are roughly 15 puzzles in the game.

You can try out a version made for the FRAVR expo here. (You will need an HTC Vive)

The Team behind Lost Pages:

Environment Art and Rigging by Julia Weigel

Character Concepts, Environment Art and Animation by Patrycja Krasinski

Character Art and Texturing by Liana Heinrich

Sound and Music by Philipp Hagmann and Damiano Picci

Brush Mechanic

From a technical point of view, Lost Pages was quite a challenge. It features some finicky mechanics: the brush, which can draw on any surface; the scissors, which can cut any object in half; and the whole paper framework, which wraps these mechanics and makes them work together.

The brush draws by stretching a quad strip behind its tip. Once the brush is activated, a single initial vertex is created. As soon as the player moves a certain distance away from this point, or turns the brush at a sharp angle, a new edge is created that connects to the previous vertices. This way a continuous, smooth line is formed. There are, however, some cases that needed extra work:

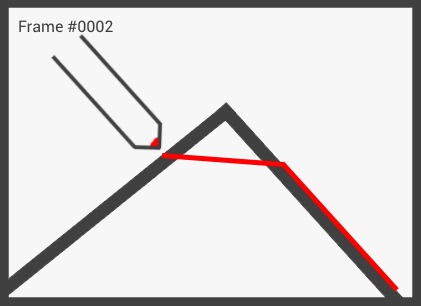

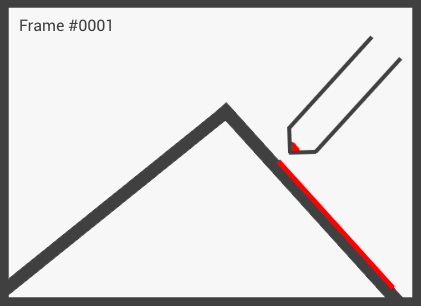

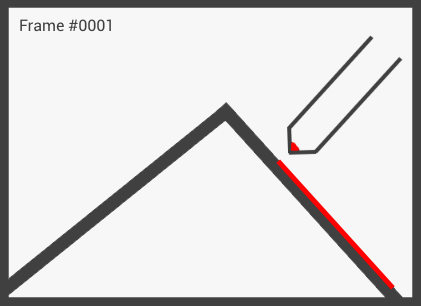

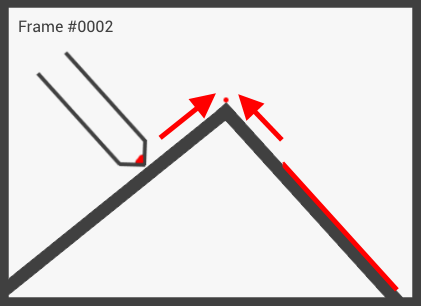

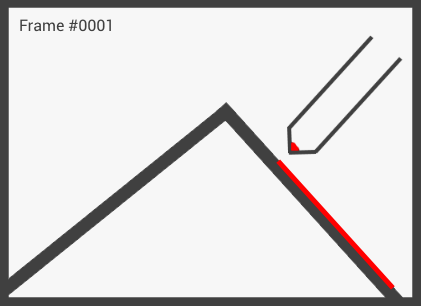
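The vertex-emission rule described for the brush can be sketched in a few lines. This is a minimal, hypothetical C++ sketch using plain structs rather than Unreal types; `StrokeBuilder`, `MinDistance` and `MaxAngleRad` are illustrative names, not the project's code. It only records the centre line of the strip; expanding each centre point into the two vertices of a quad edge is left out.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Vec3 {
    double X, Y, Z;
};

static Vec3 Sub(const Vec3& A, const Vec3& B) { return {A.X - B.X, A.Y - B.Y, A.Z - B.Z}; }
static double Dot(const Vec3& A, const Vec3& B) { return A.X * B.X + A.Y * B.Y + A.Z * B.Z; }
static double Length(const Vec3& V) { return std::sqrt(Dot(V, V)); }

// Hypothetical stroke builder (illustrative, not the original code).
// A new strip vertex is emitted once the tip is MinDistance away from the
// last vertex, or once the brush has turned by more than MaxAngleRad.
class StrokeBuilder {
public:
    StrokeBuilder(double InMinDistance, double MaxAngleRad)
        : MinDistance(InMinDistance), CosMaxAngle(std::cos(MaxAngleRad)) {}

    // Tip: brush tip position. Forward: unit vector of the brush orientation.
    void Update(const Vec3& Tip, const Vec3& Forward) {
        if (Points.empty()) {  // first update after activation: initial vertex
            Emit(Tip, Forward);
            return;
        }
        const bool bMovedFar = Length(Sub(Tip, Points.back())) >= MinDistance;
        const bool bTurnedSharply = Dot(Forward, LastForward) < CosMaxAngle;
        if (bMovedFar || bTurnedSharply) {
            Emit(Tip, Forward);  // new edge, connected to the previous one
        }
    }

    const std::vector<Vec3>& GetPoints() const { return Points; }

private:
    void Emit(const Vec3& Tip, const Vec3& Forward) {
        Points.push_back(Tip);
        LastForward = Forward;
    }

    double MinDistance;
    double CosMaxAngle;
    Vec3 LastForward{0.0, 0.0, 0.0};
    std::vector<Vec3> Points;
};
```

Comparing against the cosine of the angle avoids an `acos` per frame; a dot product below the threshold means the brush has turned further than allowed.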

If the quad strip is drawn strictly from one location to the next each frame, drawing over an edge makes the stroke cut through the mesh instead of following its surface. That part of the stroke ends up inside the mesh, invisible from the outside, so the stroke appears to be interrupted. There are two methods to mitigate this problem. The first one detects that a different face has been hit and simply subsamples between the last and the current position.

This is actually quite a naive approach, as we can instead compute the intersection between the edge and an imaginary plane that runs from the last to the current position, with its normal facing towards (or away from) the camera. We can then connect the last position to the calculated point and that point to the current position:

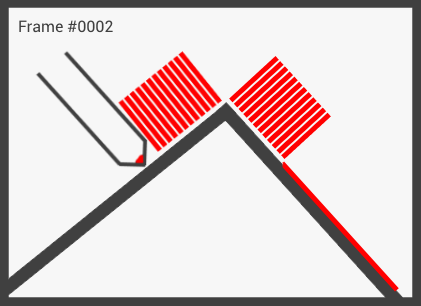
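The edge-crossing idea boils down to a segment-plane intersection. The sketch below is hypothetical (plain structs, illustrative names such as `WrapStrokeOverEdge`). It assumes one concrete plane: the one containing the stroke segment and the view direction, with normal `(Current - Last) × ViewDir`. The text describes the plane's orientation relative to the camera only loosely, so treat this choice as an assumption.

```cpp
#include <cassert>
#include <cmath>
#include <optional>
#include <vector>

struct Vec3 { double X, Y, Z; };

static Vec3 Add(const Vec3& A, const Vec3& B) { return {A.X + B.X, A.Y + B.Y, A.Z + B.Z}; }
static Vec3 Sub(const Vec3& A, const Vec3& B) { return {A.X - B.X, A.Y - B.Y, A.Z - B.Z}; }
static Vec3 Scale(const Vec3& V, double S) { return {V.X * S, V.Y * S, V.Z * S}; }
static double Dot(const Vec3& A, const Vec3& B) { return A.X * B.X + A.Y * B.Y + A.Z * B.Z; }
static Vec3 Cross(const Vec3& A, const Vec3& B) {
    return {A.Y * B.Z - A.Z * B.Y, A.Z * B.X - A.X * B.Z, A.X * B.Y - A.Y * B.X};
}

// Point where the mesh edge [EdgeA, EdgeB] crosses the plane through
// PlanePoint with normal PlaneNormal, or nothing if it does not cross it.
std::optional<Vec3> IntersectEdgeWithPlane(const Vec3& EdgeA, const Vec3& EdgeB,
                                           const Vec3& PlanePoint, const Vec3& PlaneNormal) {
    const double DistA = Dot(Sub(EdgeA, PlanePoint), PlaneNormal);
    const double DistB = Dot(Sub(EdgeB, PlanePoint), PlaneNormal);
    if (DistA * DistB > 0.0) return std::nullopt;       // both ends on the same side
    const double Denom = DistA - DistB;
    if (std::abs(Denom) < 1e-12) return std::nullopt;   // edge lies in the plane
    const double T = DistA / Denom;                      // 0 at EdgeA, 1 at EdgeB
    return Add(EdgeA, Scale(Sub(EdgeB, EdgeA), T));
}

// Hypothetical helper: Last -> crossing -> Current, so the stroke wraps
// around the edge instead of cutting through the mesh.
// Assumption: the plane contains the stroke segment and the view direction.
std::vector<Vec3> WrapStrokeOverEdge(const Vec3& Last, const Vec3& Current,
                                     const Vec3& EdgeA, const Vec3& EdgeB,
                                     const Vec3& ViewDir) {
    const Vec3 Normal = Cross(Sub(Current, Last), ViewDir);
    if (auto Crossing = IntersectEdgeWithPlane(EdgeA, EdgeB, Last, Normal)) {
        return {Last, *Crossing, Current};
    }
    return {Last, Current};
}
```

For a stroke going from a box's top face over its front edge, this returns three points, the middle one lying exactly on the edge.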

The problem with this method, however, is that it only works for two directly connected faces. If there is another face in between, we run into the very problem we wanted to solve. In the end I used a mixture of both methods: I subsample the area between the two locations, find the intersection points with all edges that were crossed and connect them, forming a continuous line.
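The combined approach can be illustrated on a toy surface. In this hypothetical sketch the "mesh" is a folded strip whose faces are the intervals between integer X values, so face lookup and edge crossings stay trivial; a real implementation would trace against the actual mesh. The key part is the inner loop: when two consecutive samples land on different faces, a crossing point is inserted for every edge between them, so a face skipped by the subsampling is still covered.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Vec3 { double X, Y, Z; };

// Hypothetical test surface standing in for a real mesh: a folded paper strip
// extruded along Y. Face k covers k <= X < k + 1; edges (folds) sit at integer X.
double SurfaceHeight(double X) {
    const double K = std::floor(X);
    const double T = X - K;
    const bool bEvenFace = static_cast<long long>(K) % 2 == 0;
    return bEvenFace ? T : 1.0 - T;  // slopes up on even faces, down on odd ones
}

int FaceIndex(double X) { return static_cast<int>(std::floor(X)); }

// Subsample Last -> Current, project samples onto the surface and insert
// the crossing point of every edge between two consecutive samples.
std::vector<Vec3> BuildStrokeSegment(const Vec3& Last, const Vec3& Current, int Subsamples) {
    std::vector<Vec3> Points;
    Vec3 Prev{Last.X, Last.Y, SurfaceHeight(Last.X)};
    Points.push_back(Prev);
    const int Steps = Subsamples + 1;
    for (int I = 1; I <= Steps; ++I) {
        const double A = static_cast<double>(I) / Steps;
        const double X = Last.X + (Current.X - Last.X) * A;
        const double Y = Last.Y + (Current.Y - Last.Y) * A;
        const Vec3 Sample{X, Y, SurfaceHeight(X)};
        const int FacePrev = FaceIndex(Prev.X);
        const int FaceCur = FaceIndex(Sample.X);
        const int Dir = FaceCur > FacePrev ? 1 : -1;
        for (int F = FacePrev; F != FaceCur; F += Dir) {
            // Edge shared by face F and face F + Dir.
            const double EdgeX = Dir > 0 ? F + 1 : F;
            const double T = (EdgeX - Prev.X) / (Sample.X - Prev.X);
            const double EdgeY = Prev.Y + (Sample.Y - Prev.Y) * T;
            Points.push_back({EdgeX, EdgeY, SurfaceHeight(EdgeX)});
        }
        Points.push_back(Sample);
        Prev = Sample;
    }
    return Points;
}
```

Even with zero subsamples, a stroke spanning four faces picks up all three folds in between, which is exactly the case the single-edge method could not handle.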

The varying thickness of the brush also proved to be a problem in some cases. When drawing near a thin edge, the stroke would extend beyond the underlying mesh, resulting either in strokes hovering in mid-air or, in the reverse case, strokes clipping into other geometry. The latter is arguably not as bad as the first case, but it still happens.

The brute-force approach taken in the end was to cast a ray downwards from the 'ideal' edge vertex location. If nothing was hit, as would be the case on a thin mesh, I cast another ray from slightly below this position back towards the stroke's current center. This way I could detect the thickness of the mesh and make the strokes fit the underlying geometry.
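The two-ray fallback can be modelled in a 2D cross-section through the stroke. This sketch assumes a box-shaped "plank" as the thin mesh and a hand-written slab raycast in place of Unreal's line traces; `PlaceEdgeVertex`, `Lift` and snapping the vertex to the mesh's side are illustrative assumptions, not the original code.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <limits>
#include <optional>
#include <utility>

// 2D cross-section through the stroke: Y runs sideways across the stroke,
// Z points up, away from the surface being drawn on.
struct Vec2 { double Y, Z; };

// Hypothetical thin mesh: an axis-aligned plank seen in cross-section.
struct Box { double MinY, MaxY, MinZ, MaxZ; };

// Slab-method raycast; returns the first hit within MaxDist.
// Rays that start inside the box are treated as misses.
std::optional<Vec2> Raycast(const Vec2& Origin, const Vec2& Dir, double MaxDist, const Box& B) {
    const double O[2] = {Origin.Y, Origin.Z};
    const double D[2] = {Dir.Y, Dir.Z};
    const double Lo[2] = {B.MinY, B.MinZ};
    const double Hi[2] = {B.MaxY, B.MaxZ};
    double TMin = -std::numeric_limits<double>::infinity();
    double TMax = std::numeric_limits<double>::infinity();
    for (int Axis = 0; Axis < 2; ++Axis) {
        if (D[Axis] == 0.0) {
            // Ray parallel to this slab: it must already lie between the planes.
            if (O[Axis] < Lo[Axis] || O[Axis] > Hi[Axis]) return std::nullopt;
            continue;
        }
        double T1 = (Lo[Axis] - O[Axis]) / D[Axis];
        double T2 = (Hi[Axis] - O[Axis]) / D[Axis];
        if (T1 > T2) std::swap(T1, T2);
        TMin = std::max(TMin, T1);
        TMax = std::min(TMax, T2);
    }
    if (TMin > TMax || TMin < 0.0 || TMin > MaxDist) return std::nullopt;
    return Vec2{Origin.Y + Dir.Y * TMin, Origin.Z + Dir.Z * TMin};
}

// Places one edge vertex of the stroke. Ideal: where the vertex would go on
// an infinitely wide surface. Lift: probe offset above/below the surface
// (assumed to be smaller than the mesh thickness).
Vec2 PlaceEdgeVertex(const Vec2& Ideal, const Vec2& Center, double Lift, const Box& Mesh) {
    // 1) Cast straight down from just above the ideal position.
    if (auto Hit = Raycast({Ideal.Y, Ideal.Z + Lift}, {0.0, -1.0}, 2.0 * Lift, Mesh)) {
        return *Hit;
    }
    // 2) Nothing below, so the stroke overhangs the mesh: cast from just
    //    below the surface back towards the stroke centre to find the side.
    const double Span = Center.Y - Ideal.Y;
    const Vec2 Towards{Span > 0.0 ? 1.0 : -1.0, 0.0};
    if (auto Side = Raycast({Ideal.Y, Ideal.Z - Lift}, Towards, std::abs(Span), Mesh)) {
        return {Side->Y, Center.Z};  // pull the vertex in to the mesh's side
    }
    return Center;  // fallback: collapse the vertex onto the stroke centre
}
```

On a plank 1 unit wide, an ideal vertex 0.75 units from the centre is pulled back to the plank's side at 0.5, so the stroke no longer hovers past the edge.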

Glue & Eraser Mechanic

The glue and the eraser were the simplest tools to implement. These two were also the features that differed the most from their initial sketch (although they still stayed pretty close to the original idea).

The glue applies small glue blobs to surfaces, which connect two actors together when they come into contact with each other. This is done using a simple physics constraint between the two. Once glued together, the actors no longer collide with each other. This reduces jittering when players flail two glued actors around with their hands. We encountered no downsides to this method, so I stuck with it.

Also, every glue connection beyond the first one does not actually constrain the two actors. We had many problems when trying to glue two actors together multiple times, and ultimately it was for the better that I only made the first connection valid. We have only a handful of concave objects where this could theoretically become apparent, but so far no one has even noticed it, and it 'solved' a big problem rather smoothly.
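The two glue rules (glued pairs stop colliding, and only the first contact creates a constraint) amount to a small piece of bookkeeping. This is a hypothetical sketch with integer IDs standing in for actors; `GlueRegistry` is an illustrative name, and wherever it returns `true` an engine would create the actual physics constraint (for example a `UPhysicsConstraintComponent` in Unreal).

```cpp
#include <algorithm>
#include <cassert>
#include <set>
#include <utility>

using ActorId = int;  // stand-in for an actor pointer or handle

// Hypothetical glue bookkeeping: only the first glue contact between two
// actors produces a constraint; later contacts for the same pair are ignored.
// Glued pairs also stop colliding with each other to avoid jitter.
class GlueRegistry {
public:
    // Returns true if a new constraint should be created for this contact.
    bool OnGlueContact(ActorId A, ActorId B) {
        if (A == B) return false;
        return Constrained.insert(Key(A, B)).second;  // false if already glued
    }

    bool ShouldCollide(ActorId A, ActorId B) const {
        return Constrained.count(Key(A, B)) == 0;
    }

private:
    // Order-independent key so (A, B) and (B, A) refer to the same pair.
    static std::pair<ActorId, ActorId> Key(ActorId A, ActorId B) {
        return std::minmax(A, B);
    }

    std::set<std::pair<ActorId, ActorId>> Constrained;
};
```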

The glue logic differed from its initial sketch in the way it is applied to surfaces. Initially we planned for the glue stick to draw on surfaces, much like the brush, so that a line of glue would form on the mesh. For demonstration purposes I implemented an application mechanic which simply spawned the aforementioned blob, not a glue line. By the time I had finished the brush mechanic, though, both the team and our testers (whom we swapped out regularly) had grown so accustomed to the glue blob that we simply kept it. A nice bonus is that all tools now differ at least a bit in how they are applied: the brush has to be moved, the glue has to be tapped, the eraser needs to touch and the scissors need to be let go of.

The eraser was originally used much like the brush: you pressed it against a surface and moved it around. This was fine in theory and in our earlier prototype, but later on it became apparent that it actually hindered gameplay a lot. Players would crawl on the floor, slowly erasing each stroke they had drawn, when all they wanted was to erase everything at once. Similar to Tilt Brush, where the eraser removes the complete stroke, I then implemented a much simpler mechanic where the mere touch of the eraser immediately removes the entire line. Erasing became much more user-friendly afterwards. Although the work put into the more precise eraser mechanic was wasted, the change ultimately resulted in a better experience.
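Whole-stroke erasing is simple enough to sketch directly. This assumes strokes are stored as lists of points and that any point within a radius of the eraser tip counts as a touch; both are simplifications, since a real version would more likely test the eraser's collision against the stroke mesh. `EraseTouchedStrokes` is a hypothetical name.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct Vec3 { double X, Y, Z; };
struct Stroke { std::vector<Vec3> Points; };

static double DistSq(const Vec3& A, const Vec3& B) {
    const double DX = A.X - B.X, DY = A.Y - B.Y, DZ = A.Z - B.Z;
    return DX * DX + DY * DY + DZ * DZ;
}

// Removes every stroke that has at least one point within Radius of the
// eraser tip, the whole stroke at once. Returns the number of removed strokes.
std::size_t EraseTouchedStrokes(std::vector<Stroke>& Strokes, const Vec3& Tip, double Radius) {
    const double RadiusSq = Radius * Radius;
    const auto Touched = [&](const Stroke& S) {
        return std::any_of(S.Points.begin(), S.Points.end(),
                           [&](const Vec3& P) { return DistSq(P, Tip) <= RadiusSq; });
    };
    const auto NewEnd = std::remove_if(Strokes.begin(), Strokes.end(), Touched);
    const auto Removed = static_cast<std::size_t>(Strokes.end() - NewEnd);
    Strokes.erase(NewEnd, Strokes.end());
    return Removed;
}
```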

Scissors Mechanic

For the scissors it was not difficult to come up with a solution. What was difficult, though, was eradicating bugs, as the scissors were deeply connected to the paper framework and thus quite error-prone.

The basic process of using the scissors goes like this:

- Create a plane at the location of the scissors and let its normal vector be the scissors' right vector.

- Trace for an object in front of the scissors.

- Create two separate actors that will be filled with the sliced data of the hit actor.

- Iterate over all sections of the mesh. A section is an Unreal term for the primitives inside a mesh that share a material. Compare each section to the plane to determine on which side it lies. If it is located completely on one side, add it directly to the corresponding half. If it intersects the slice plane, though, each of its vertices needs to be iterated over and assigned to one of two newly created sections. Edges that intersect the plane spawn a new vertex at the intersection point with interpolated values.

- Slice the collision meshes in a similar way.

- Slice the strokes on the actor and assign them to the correct half, slice the glue connections and notify all other actors that may be interested in such an event.
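The per-section step in the list above can be sketched with plain polygon clipping against a plane, in the style of Sutherland-Hodgman. This hypothetical sketch works on a single convex polygon carrying position and vertex colour (the attribute the text mentions adding); it is not Unreal's built-in slicing code, and `ClassifySection` and `SplitPolygon` are illustrative names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct Vertex {
    double X, Y, Z;
    double R, G, B;  // vertex colour, interpolated like any other attribute
};

struct Plane { double NX, NY, NZ, D; };  // points P with N·P == D lie on it

static double SignedDistance(const Plane& P, const Vertex& V) {
    return P.NX * V.X + P.NY * V.Y + P.NZ * V.Z - P.D;
}

static Vertex Lerp(const Vertex& From, const Vertex& To, double T) {
    auto L = [T](double U, double W) { return U + (W - U) * T; };
    return {L(From.X, To.X), L(From.Y, To.Y), L(From.Z, To.Z),
            L(From.R, To.R), L(From.G, To.G), L(From.B, To.B)};
}

enum class Side { Front, Back, Straddling };

// Whole-section test: if every vertex lies on one side, the section can be
// moved to that half without touching its triangles.
Side ClassifySection(const std::vector<Vertex>& Vertices, const Plane& P) {
    bool bFront = false, bBack = false;
    for (const Vertex& V : Vertices) {
        const double D = SignedDistance(P, V);
        bFront = bFront || D > 0.0;
        bBack = bBack || D < 0.0;
    }
    if (bFront && bBack) return Side::Straddling;
    return bBack ? Side::Back : Side::Front;
}

// Splits one convex polygon (e.g. a triangle) into a front and a back part.
// Each edge crossing the plane spawns a new vertex, shared by both parts,
// with position and colour interpolated at the intersection point.
void SplitPolygon(const std::vector<Vertex>& Poly, const Plane& P,
                  std::vector<Vertex>& Front, std::vector<Vertex>& Back) {
    Front.clear();
    Back.clear();
    const std::size_t N = Poly.size();
    for (std::size_t I = 0; I < N; ++I) {
        const Vertex& A = Poly[I];
        const Vertex& B = Poly[(I + 1) % N];
        const double DA = SignedDistance(P, A);
        const double DB = SignedDistance(P, B);
        if (DA >= 0.0) Front.push_back(A);  // vertices on the plane go to both
        if (DA <= 0.0) Back.push_back(A);
        if ((DA > 0.0 && DB < 0.0) || (DA < 0.0 && DB > 0.0)) {
            const Vertex Cut = Lerp(A, B, DA / (DA - DB));
            Front.push_back(Cut);
            Back.push_back(Cut);
        }
    }
}
```

Cutting a triangle through two of its edges yields a triangle on one side and a quad on the other; the quad would then be re-triangulated before being written into the new section.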

Unreal Engine already has very nice functionality for slicing a mesh at runtime, but I still had to add a few features like vertex color support and, of course, all the 'logical' slicing, like keeping the glue connections on the correct half or cutting the drawn strokes in two as well. Originally, we aimed for arbitrary cutting not constrained to a plane, but due to time constraints we settled on this simplified mechanic.

A feature not present during the semester presentation is multi-threaded cutting, which moves all the calculations to another thread. Depending on the complexity of the sliced mesh, it is either cut directly on the game thread, which results in immediate slicing, or transferred to another thread, which results in slicing after 3 to 4 frames. The second approach trades a bit of immediate feedback for a smoother framerate. We also cover those few frames with a bunch of sounds and a fading visual effect, which hides the delay completely.
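Choosing between synchronous and asynchronous slicing can be sketched with a complexity threshold. This hypothetical version uses `std::async` as a portable stand-in for the engine's own task system, triangle count as the complexity measure and a dummy `SliceMesh` function; the game thread polls the job once per frame until the result arrives.

```cpp
#include <cassert>
#include <chrono>
#include <future>
#include <optional>
#include <thread>

struct SliceResult { int TrianglesFront; int TrianglesBack; };

// Hypothetical stand-in for the real slicing work.
SliceResult SliceMesh(int TriangleCount) {
    return {TriangleCount / 2, TriangleCount - TriangleCount / 2};
}

// Small meshes are sliced immediately on the calling (game) thread; larger
// ones are handed to a worker thread and polled once per frame.
class SliceJob {
public:
    SliceJob(int TriangleCount, int AsyncThreshold) {
        if (TriangleCount <= AsyncThreshold) {
            Result = SliceMesh(TriangleCount);
        } else {
            bAsync = true;
            Pending = std::async(std::launch::async, SliceMesh, TriangleCount);
        }
    }

    // Call once per frame; returns the result as soon as it is available.
    std::optional<SliceResult> Poll() {
        if (!Result && Pending.valid() &&
            Pending.wait_for(std::chrono::seconds(0)) == std::future_status::ready) {
            Result = Pending.get();  // get() also invalidates the future
        }
        return Result;
    }

    bool IsAsync() const { return bAsync; }

private:
    bool bAsync = false;
    std::optional<SliceResult> Result;
    std::future<SliceResult> Pending;
};
```

Polling with a zero timeout keeps the game thread from ever blocking; the frames until the result is ready are the delay that the sounds and visual effect are meant to cover.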

Then there was the problem of how to determine whether a sliced actor half should be made dynamic (i.e. physically simulated) or whether it should stay static. Two examples:

The difference between the two actors is that the railing has its anchor points defined. Every paper actor can define points that connect it to some completely static object like the ground or the walls. When such an actor is cut in two, the anchor points are split among the halves as well. If a half contains at least one anchor point, it is marked as static; otherwise it is made dynamic. The railing, for example, has its anchor points defined here:

Last but not least, there was the question of collision and how complex we were going to make it. The hollow interior of a cut tree trunk, for example, is so big that any player would expect it to be non-blocking. Many of the more experienced players actually tried to put something inside the trunk to see whether its collision was completely convex or not. Due to the short semester we were not able to build custom-tailored convex hulls, which is why we resorted to the V-HACD library built into Unreal's mesh editor. For some of the more complex meshes, like the trunk mentioned above, we went up to 30 simple convex volumes to approximate the shape as closely as possible. The results of the convex hull decomposition were decent enough, and they allowed us to focus more on actual asset production.
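The anchor-point rule from earlier in this section can be sketched in a few lines. This assumes anchors are plain points and the cut is a plane; `DecideMobility` and the `Mobility` enum are illustrative names, not the project's API. Anchors lying exactly on the plane are assigned to the front half here, which is an arbitrary choice.

```cpp
#include <cassert>
#include <vector>

struct Vec3 { double X, Y, Z; };
struct Plane { Vec3 Normal; double D; };  // points P with Normal·P == D lie on it

enum class Mobility { Static, Dynamic };
struct HalfMobility { Mobility Front; Mobility Back; };

// Each half keeps the anchors on its side of the cut. A half with at least
// one anchor stays static; a half without any becomes physically simulated.
HalfMobility DecideMobility(const std::vector<Vec3>& Anchors, const Plane& Cut) {
    int FrontAnchors = 0;
    int BackAnchors = 0;
    for (const Vec3& A : Anchors) {
        const double Dist =
            Cut.Normal.X * A.X + Cut.Normal.Y * A.Y + Cut.Normal.Z * A.Z - Cut.D;
        if (Dist >= 0.0) ++FrontAnchors; else ++BackAnchors;
    }
    return {FrontAnchors > 0 ? Mobility::Static : Mobility::Dynamic,
            BackAnchors > 0 ? Mobility::Static : Mobility::Dynamic};
}
```

A railing anchored at both posts stays fully static when cut between them, while cutting off an unanchored end lets that piece fall.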

Scraps

Next there were the randomly shuffled scraps. From a technical point of view they were simple: pick random parts, assemble them, add a random voice and you've got a randomized character. The difficult part was exporting each set piece so that the arms, legs and head would stay connected to the torso. All parts had to trigger their animations at the same time while being completely different components, and they could never go out of sync, so that they appeared as one entity. I had to tackle this feature many, many times until everything finally worked. There are a total of 20 scrap pieces covering the parts of a scrap (two arms, one leg pair, one torso and one head), and all of them had to be included in the concurrent animation system. Setting a random voice was nothing more than sending a parameter to Wwise, which played events such as screaming using said parameter.
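How the parts were kept in sync is not described in detail, so the following is only one plausible approach, stated as an assumption: every part reads a single clock owned by the scrap instead of advancing its own animation time. The random assembly uses `std::mt19937`; the slot names, `VariantsPerSlot` and `VoiceIndex` are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <random>

// Hypothetical slot layout following the part list above.
enum Slot { LeftArm, RightArm, Legs, Torso, Head, SlotCount };

struct Scrap {
    std::array<int, SlotCount> PartVariant{};  // chosen variant index per slot
    int VoiceIndex = 0;     // would be sent to the audio middleware as a parameter
    double AnimTime = 0.0;  // one clock for the whole scrap

    // Every part reads the scrap's clock instead of keeping its own,
    // so the parts cannot drift out of sync.
    double PartAnimTime(Slot /*Part*/) const { return AnimTime; }
    void Tick(double DeltaSeconds) { AnimTime += DeltaSeconds; }
};

// Picks one random variant per slot and a random voice.
Scrap MakeRandomScrap(std::mt19937& Rng, const std::array<int, SlotCount>& VariantsPerSlot,
                      int VoiceCount) {
    Scrap Result;
    for (int I = 0; I < SlotCount; ++I) {
        std::uniform_int_distribution<int> Pick(0, VariantsPerSlot[I] - 1);
        Result.PartVariant[I] = Pick(Rng);
    }
    std::uniform_int_distribution<int> PickVoice(0, VoiceCount - 1);
    Result.VoiceIndex = PickVoice(Rng);
    return Result;
}
```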

The scrap swarm AI was quite straightforward, but debugging it took a lot of standing up and crouching down. The scraps avoid the player camera on the horizontal plane.

Once the player comes too near a scrap, the scrap moves away from the player by the distance between the two, along the direction from the player to the scrap. Movement uses a simple distance-dependent interpolation function. If the player camera moves too far away from a scrap, the scrap gets tethered to the player and moves at least a minimum distance towards them.

When a scrap is trying to evade the player camera but would hit a wall on the way, it instead moves to the other side of the player, which prevents it from getting stuck between the wall and the player.
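The swarm rules above can be sketched on the horizontal plane. This is a hypothetical sketch: `ComputeTarget`, `SwarmSettings` and the `IsBlocked` wall query are illustrative names, tethering is interpreted as moving back to the tether radius, and the "distance-dependent interpolation" is modelled as covering a fixed fraction of the remaining distance each frame.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <functional>

struct Vec2 { double X, Y; };  // horizontal plane only; height is ignored

static Vec2 Add(const Vec2& A, const Vec2& B) { return {A.X + B.X, A.Y + B.Y}; }
static Vec2 Sub(const Vec2& A, const Vec2& B) { return {A.X - B.X, A.Y - B.Y}; }
static Vec2 Scale(const Vec2& V, double S) { return {V.X * S, V.Y * S}; }
static double Length(const Vec2& V) { return std::sqrt(V.X * V.X + V.Y * V.Y); }

struct SwarmSettings {
    double EvadeRadius;   // closer than this: move away from the player
    double TetherRadius;  // farther than this: pulled back towards the player
};

// Hypothetical wall query: true if moving from From to To would hit a wall.
using BlockedFn = std::function<bool(const Vec2& From, const Vec2& To)>;

Vec2 ComputeTarget(const Vec2& Scrap, const Vec2& Player, const SwarmSettings& S,
                   const BlockedFn& IsBlocked) {
    const Vec2 Offset = Sub(Scrap, Player);
    const double Dist = Length(Offset);
    if (Dist < 1e-9) return Scrap;               // degenerate: no direction
    const Vec2 Dir = Scale(Offset, 1.0 / Dist);  // player -> scrap
    if (Dist < S.EvadeRadius) {
        // Move away by the current distance, along player -> scrap.
        const Vec2 Away = Add(Scrap, Scale(Dir, Dist));
        // Wall in the way: go to the other side of the player instead.
        return IsBlocked(Scrap, Away) ? Sub(Player, Scale(Dir, Dist)) : Away;
    }
    if (Dist > S.TetherRadius) {
        return Add(Player, Scale(Dir, S.TetherRadius));  // tethered: come back
    }
    return Scrap;  // comfortable distance: stay put
}

// Distance-dependent interpolation: each step covers a fixed fraction of the
// remaining distance, so scraps move fast when far away and ease in on arrival.
Vec2 StepTowards(const Vec2& Pos, const Vec2& Target, double Rate, double DeltaSeconds) {
    const double Alpha = std::clamp(Rate * DeltaSeconds, 0.0, 1.0);
    return Add(Pos, Scale(Sub(Target, Pos), Alpha));
}
```

Keeping each scrap's update to a few vector operations is what makes it cheap to run many scraps at once.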

The AI had to be simple, as we needed to support a lot of scraps at a time, but it does a good job. Most players treated the scraps like a bunch of floating dogs and threw away their tools over and over again to watch the scraps fetch them.

Sound & Wwise

Lastly, some words about the sound. Since we worked with the same sound designers as on Stockfall, we already knew our way around Wwise and its intricacies. What was new, though, was the addition of binaural sound. We used the Auro Headphone plugin. It required some modifications to the Unreal Wwise integration shipped with the Wwise Launcher, and these were not as intuitive as they could have been. There was noticeably less support for issues related to Unreal and Wwise compared to, say, Unity and Wwise.

Beyond that, Wwise was the root of some issues when packaging the game, as its declaration of a few UFUNCTIONs caused the Unreal packaging tool to crash. Other teams during the semester had similar problems with the software and in some cases even ditched it altogether. If my team and I had not had the experience from our previous project, we probably would have joined them.

Wwise is a great tool, and it offers a lot of fine control over sounds and their interaction with the environment, but its integration into Unreal and Perforce workflows caused us some trouble.